A Meetup That Rewired How I Think About AI

I’m writing this live at the first Budapest Agentics Foundation meetup, taking notes in Claude Code on my phone. Coding a blog post from a meetup on a phone is exactly as ridiculous as it sounds. But it’s the most honest way to capture what’s being said here while it’s still fresh.

The Agentics Foundation is a volunteer-run community that’s been going for two years and is spreading around the world. This was the first Budapest event. On Thursdays and Fridays at 6pm there’s an open Zoom call where people present projects and discoveries. WhatsApp and Discord channels fill the gaps between sessions.

The headline talk came from Robert Sárosi, a growth engineer at Fireflies.ai and ex-startup founder. His topic: Scaling Growth with Agentic AI. What follows are my takeaways from his presentation, mixed with my own reflections as an indie maker.

From Note-Taking App to AI Employee

Fireflies.ai started as a meeting note-taking tool. Their goal was to unlock the knowledge buried inside conversations. What caught my attention is where they’ve ended up: 15 products, built without growing the team beyond roughly 100 people.

Shipping 15 products with 100 people means something fundamental has changed about how they work. According to Robert, it comes down to one principle:

If you have to do it two or three times, automate it.

Not “consider automating it.” Not “add it to the backlog.” Automate it. That’s the policy. They use AI tools and automations to ship and iterate fast. The automation isn’t an optimisation — it’s the operating model.

What Is Agentic AI, Actually?

Robert broke this down cleanly. Agentic AI is:

- Autonomous — it works independently

- Perceptive — it can understand its environment

- Reasoning — it can think through problems

- Planning — it can break work into steps

- Executing — it can carry out those steps

- Learning — it evaluates what happened and adjusts future plans

That last one is the key differentiator. Sound familiar? It’s the compounding system I built for ASO (App Store Optimisation), except applied to everything.

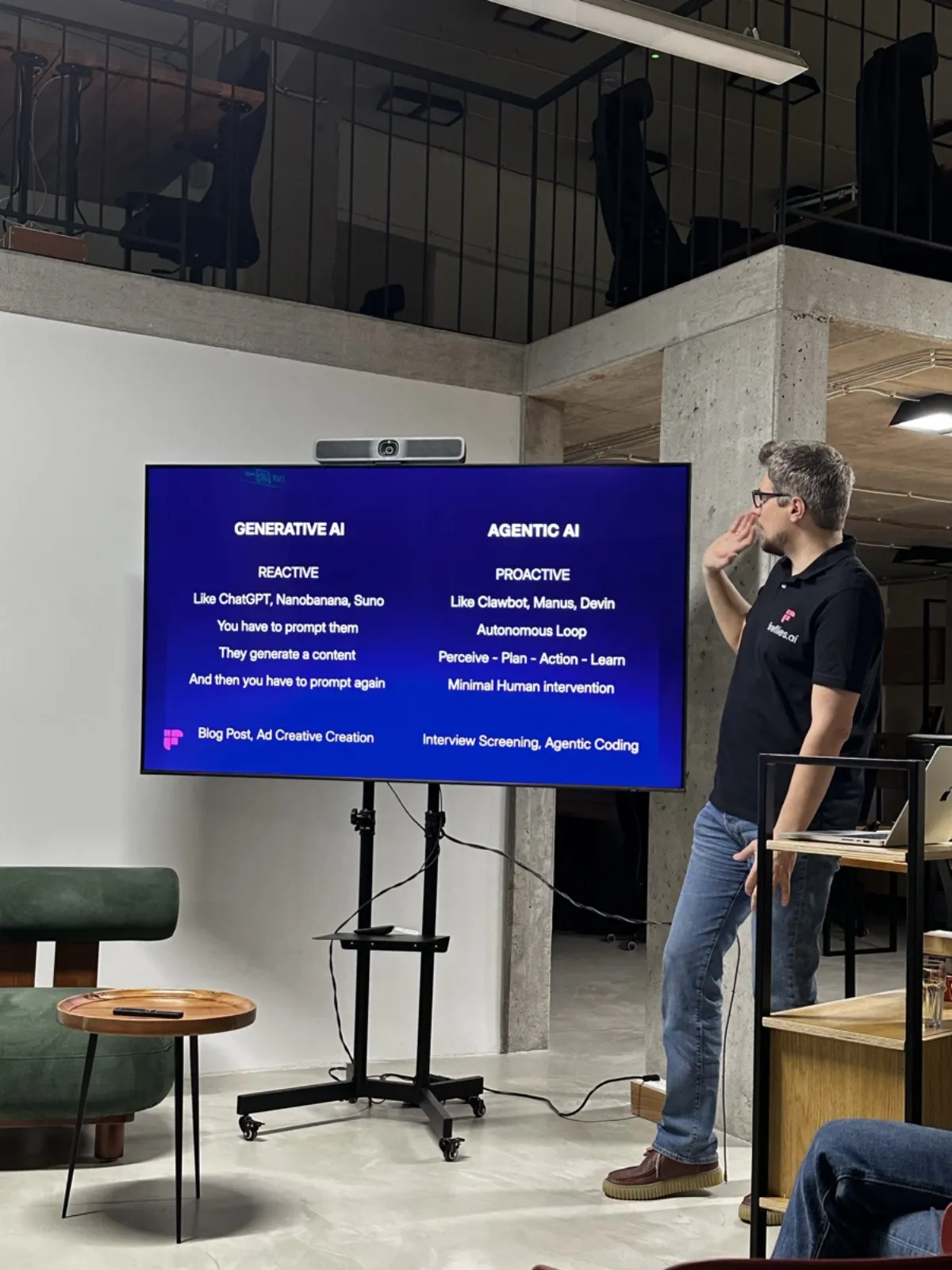

Generative AI is reactive. You prompt it, it responds. You’re always the initiator. Think blog posts, ad creative, one-off content. Agentic AI is proactive. It works in autonomous loops. Think interview screening, automated coding pipelines, systems that run while you sleep.
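
To make the loop idea concrete, here is a toy sketch in Python. It is my own illustration, not something Robert showed: the `Agent` class, its method names, and the number-chasing task are all invented for the example. The point is only the shape of the cycle, where nobody prompts between steps and the learn step changes how the next plan is made.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Toy agent. Every method is a stand-in, not a real framework API."""
    goal: int
    step_size: int = 1
    lessons: list[str] = field(default_factory=list)

    def perceive(self, state: int) -> int:
        # Observe how far the current state is from the goal.
        return self.goal - state

    def plan(self, gap: int) -> int:
        # Choose the next move, capped by the current step size.
        return max(-self.step_size, min(self.step_size, gap))

    def act(self, state: int, move: int) -> int:
        # Carry out the move and return the new state.
        return state + move

    def learn(self, gap_before: int, gap_after: int) -> None:
        # Evaluate the outcome: if we made progress and are still far away,
        # take bigger steps from now on.
        if abs(gap_after) < abs(gap_before) and abs(gap_after) > self.step_size:
            self.step_size *= 2
            self.lessons.append(f"progress made, step size now {self.step_size}")


def run(agent: Agent, state: int, max_cycles: int = 20) -> tuple[int, int]:
    """Run perceive, plan, act, learn until the goal is reached.

    Returns the final state and the number of cycles that acted.
    """
    for cycle in range(1, max_cycles + 1):
        gap = agent.perceive(state)
        if gap == 0:
            return state, cycle - 1
        move = agent.plan(gap)
        state = agent.act(state, move)
        agent.learn(gap, agent.perceive(state))
    return state, max_cycles
```

Calling `run(Agent(goal=10), 0)` reaches the goal in fewer cycles than a fixed step of 1 would need, because the learn step adjusts the plan along the way. A real agent would replace each method with model calls and tools, but the loop stays the same.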

In the Loop vs On the Loop — The Agentic Engineering Shift

Robert drew a distinction between three modes:

Coding — you’re writing the code yourself. You’re in the loop, hands on keyboard.

Vibe coding — you don’t care about the code. You’re prompting and accepting whatever comes back. You’re still in the loop — still prompting, still steering — but you’ve let go of understanding every line.

Agentic engineering — AI is writing the code. But here’s the critical shift: you’re not in the loop. You’re on the loop. You work on the plan, hand it to the agent, and let it run to completion.

The difference isn’t philosophical. It’s about what scales. Coding scales with your time. Vibe coding scales a bit better but you’re still the bottleneck. Agentic engineering scales with tooling and context. The more you give it, the more it can do — independently.

That reframe — in the loop vs on the loop — is one I’ll be thinking about for a while.

The 70% Context Window Rule

Robert shared a practical framework for how the agentic loop should work. The agent runs the full cycle — perceive, plan, act, learn — inside a single context window. But here’s the discipline: before 70% of the context is used up, clear and start again. The learnings from that cycle get stored in long-term memory and applied to the next phase.

The context window isn’t unlimited. Pretending otherwise leads to degraded outputs. The system needs to know when to checkpoint, compress, and restart fresh.
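
A rough sketch of how that discipline might look in code. This is my own guess at an implementation, not what Fireflies runs: the `Session` class, the 200,000-token window, and the four-characters-per-token estimate are all assumptions, and `summarise` is a placeholder for whatever produces the learnings.

```python
from dataclasses import dataclass, field

CONTEXT_LIMIT_TOKENS = 200_000  # assumed window size; depends on the model
CHECKPOINT_RATIO = 0.70         # the threshold from the talk


def estimate_tokens(text: str) -> int:
    # Crude heuristic (about 4 characters per token); a real tokenizer is more accurate.
    return max(1, len(text) // 4)


@dataclass
class Session:
    limit: int = CONTEXT_LIMIT_TOKENS
    messages: list[str] = field(default_factory=list)
    learnings: list[str] = field(default_factory=list)  # survives resets
    used: int = 0
    resets: int = 0

    def add(self, message: str, summarise) -> None:
        cost = estimate_tokens(message)
        # Checkpoint before this message would push usage past the threshold.
        if self.messages and self.used + cost > self.limit * CHECKPOINT_RATIO:
            self.checkpoint(summarise)
        self.messages.append(message)
        self.used += cost

    def checkpoint(self, summarise) -> None:
        # Keep what was learned, then start again with a clean context.
        self.learnings.append(summarise(self.messages))
        self.messages = []
        self.used = 0
        self.resets += 1
```

The design choice worth noting is that the check happens before the message is added, so the session never crosses the line and then tries to clean up afterwards.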

But the learn phase goes deeper than “save and restart.” The agent needs both short-term and long-term memory. Short-term can live in markdown files — scratchpads, notes, context docs that exist for the duration of a project. Long-term learnings — patterns, rules, things that apply across projects — need something more durable. A local database, for example.

Most people skip this part. They build the loop but forget the memory. The agent learns something valuable in cycle three and has forgotten it by cycle five.
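
Here is a minimal sketch of the two memory tiers, assuming a markdown scratchpad for short-term notes and a local SQLite file for long-term learnings. The function names and the `learnings` table are hypothetical; Robert only said "a local database, for example".

```python
import sqlite3
from pathlib import Path


def note(scratchpad: Path, text: str) -> None:
    # Short-term memory: append a bullet to a markdown file that lives with the project.
    with scratchpad.open("a", encoding="utf-8") as f:
        f.write(f"- {text}\n")


def open_memory(db_path: str) -> sqlite3.Connection:
    # Long-term memory: a local SQLite database that outlives any single project.
    conn = sqlite3.connect(db_path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS learnings ("
        "id INTEGER PRIMARY KEY, topic TEXT NOT NULL, lesson TEXT NOT NULL)"
    )
    return conn


def remember(conn: sqlite3.Connection, topic: str, lesson: str) -> None:
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO learnings (topic, lesson) VALUES (?, ?)", (topic, lesson)
        )


def recall(conn: sqlite3.Connection, topic: str) -> list[str]:
    # Return lessons for a topic in the order they were stored.
    rows = conn.execute(
        "SELECT lesson FROM learnings WHERE topic = ? ORDER BY id", (topic,)
    )
    return [lesson for (lesson,) in rows]
```

At the start of each new cycle the agent would call something like `recall(conn, "prompts")` and load the result into its fresh context, which is how a lesson from cycle three can still be there in cycle five.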

I’ve seen this firsthand. Long Claude sessions that run deep into context start drifting — forgetting earlier constraints, repeating themselves, losing the thread. Structured checkpointing keeps the quality high.

How Fireflies Actually Uses Agentic AI

Three real examples from inside the company:

- Performance assessment — quarterly performance reviews based on stories, GitHub activity, and Slack activity. Not vibes. Data.

- Growth Slack bot — answers questions about metrics, funnels, and features on demand. The kind of thing that normally means pinging three people across two timezones.

- Devin (coding agent) — automated coding that creates pull requests for manual review. The agent writes, a human reviews. On the loop, not in it.

Monitor Your Models — They Change Behind the Scenes

One practical takeaway I didn’t expect: build internal tools to monitor your models’ output quality. Anthropic, OpenAI, and the rest regularly tweak things behind the scenes. The version number doesn’t change, but behaviour can shift.

If you’re running models in production, you need to know when quality drifts. Fireflies also bakes one line into its system prompts: “Do not make up numbers.” Simple, blunt, effective. Without it, models hallucinate metrics with total confidence.
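
Robert didn’t show what their monitoring tools look like, so the following is only a sketch of one way to do it: run a fixed suite of prompts on a schedule, score the answers, and flag a run that falls well below the historical average. The scoring rule, the `ask` callable, and the 0.1 tolerance are all my assumptions.

```python
from statistics import mean


def score_answer(answer: str, must_include: list[str]) -> float:
    # Fraction of required facts that appear in the answer (a deliberately crude metric).
    hits = sum(1 for fact in must_include if fact.lower() in answer.lower())
    return hits / len(must_include)


def run_suite(ask, suite: list[tuple[str, list[str]]]) -> float:
    # `ask` is a placeholder for whatever function calls your model.
    return mean(score_answer(ask(prompt), facts) for prompt, facts in suite)


def has_drifted(history: list[float], today: float, tolerance: float = 0.1) -> bool:
    # Flag a run that scores more than `tolerance` below the average of past runs.
    return bool(history) and today < mean(history) - tolerance
```

Substring matching is far too blunt for real use, but even a check this simple would notice a model that suddenly stops answering questions it used to get right.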

Trust the Learn Phase

This was the point Robert kept coming back to. If your system has a proper learn phase, trust it. The system will figure out the problem. Not immediately. Not perfectly. But iteratively, compounding, getting closer with each cycle.

The temptation is to intervene early, tweak the prompts, override the agent’s conclusions. But if you’ve built the loop properly, the learn phase is doing the heavy lifting. Give it time. Give it data. Let it compound.

The people who get the least out of agentic systems are the ones who won’t let the system learn.

The Pitfalls Nobody Talks About (Robert’s Parting Shot)

Robert closed with a list of pitfalls that felt uncomfortably familiar:

- Overestimating capability — the model can do impressive things, but it’s not magic. Knowing its limits saves you from building on sand.

- Ambiguous goals and vague prompts — if you can’t articulate what you want clearly, the agent can’t deliver it. Garbage in, garbage out, at scale.

- Not spending enough time with it — you need to invest the hours to understand how the system behaves. There are no shortcuts.

- Oversteering — micromanaging the agent defeats the purpose. If you’re correcting every step, you’re coding with extra steps.

- Lack of context engineering — the agent is only as good as the context you give it. This is the part most people skip and then wonder why results are mediocre.

- Ignoring security concerns — autonomous systems with access to your codebase, data, and infrastructure need guardrails.

- Neglecting rigorous evaluation — if you’re not measuring whether the agent’s output is actually good, you’re just hoping.

- Lack of guardrails — related but distinct. The agent needs boundaries, not just goals.
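
To show what "boundaries, not just goals" could mean in practice, here is a small sketch of a permission check an agent harness might run before executing anything. The allowlist and the protected paths are hypothetical examples of mine, not anything from the talk.

```python
ALLOWED_COMMANDS = {"git status", "git diff", "pytest"}  # hypothetical allowlist
PROTECTED_PREFIXES = (".env", "secrets/")                # hypothetical protected paths


def is_permitted(command: str, paths: list[str]) -> bool:
    """Return True only if the command is allowlisted and no path is protected."""
    if command not in ALLOWED_COMMANDS:
        return False
    # str.startswith accepts a tuple, so one call checks every protected prefix.
    return not any(path.startswith(PROTECTED_PREFIXES) for path in paths)
```

An allowlist fails closed: anything the agent invents that you did not anticipate is refused by default, which is the safer direction to be wrong in.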

Second Talk: Generative AI for Product Leaders — Viktor Nagy

The second talk came from Viktor Nagy, an econometrician turned tech leader who’s worked at GitLab and now leads product at Clearcisio, a property management system. His angle: less about engineering systems at scale and more about where you actually are on the AI adoption curve — and what practical AI use cases look like for product managers right now.

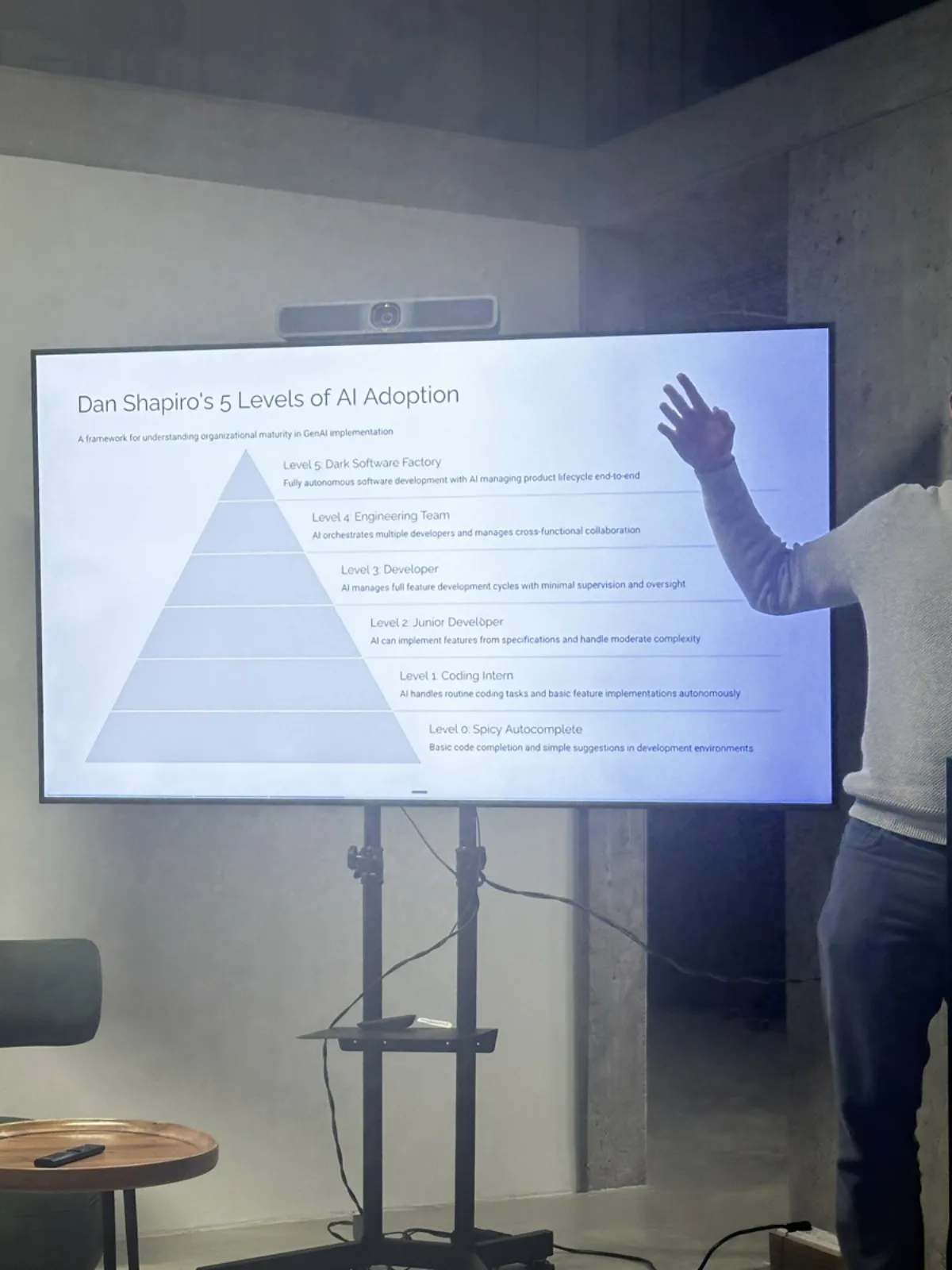

Dan Shapiro’s 5 Levels of AI Adoption

Viktor opened with Dan Shapiro’s framework for assessing AI adoption maturity. It’s a progression from basic usage through to fully autonomous systems, and it’s worth being honest about where you sit.

Listening to Viktor walk through it, I’d place myself somewhere between level 3 and 4 — and until that moment, I thought that was pretty good. The framework made me realise there are one or two levels above me that are actually reachable, not theoretical. More importantly, it gave me a clear definition of what each level looks like, which means I now have something concrete to work towards instead of a vague sense of “do more with AI.”

What His Team Actually Ships With AI

What made Viktor’s talk valuable is that these weren’t hypothetical examples. These are things his team actually does, without a dedicated AI engineer on staff.

1. Quantitative insights with AI agents

They don’t have an AI engineer, so they hooked up an AI agent directly to their data and use it to aid analysis through Jupyter notebooks. Viktor said he started by reading every line of code the agent produced — reviewing it carefully, checking the logic. But over time, as the system matured, he’s built trust. He still reviews the data and analysis outputs, but he no longer audits every line of code. It’s a huge time saver.

That progression — from auditing everything to trusting the system while still reviewing outputs — maps perfectly to what Robert said about the learn phase. You build trust through iteration, not through hope.

2. Qualitative insights tracking

They document qualitative research in markdown files. Zoom transcripts get combined with handwritten notes, and AI gives structure to those combined inputs. It’s not replacing the researcher’s judgement — it’s pulling signal from messy, unstructured sources.

3. Targeted email writer

This one caught my attention. They use an agent to follow up with customers they’ve spoken to — but not with generic templates. The workflow is: read through all the customer notes, tell me which customers I should speak to about feature X and why, then write a personalised email for each one based on their specific notes and save it as a draft for review.

The result? Everybody replies. Not after two days of silence — actual, engaged replies.

That hit close to home. I have emails from StillMind users sitting in my inbox — people who raised concerns that I’ve since shipped fixes for. I should be reaching out to them. Viktor’s example was the nudge I needed.
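
The workflow Viktor described can be sketched roughly like this. It is an illustration under heavy assumptions: the keyword match stands in for the agent’s judgement about who to contact, `write_email` is a placeholder for the model call, and the `Draft` type is invented. The one part taken straight from his description is that everything ends up as a draft for review.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    customer: str
    reason: str
    body: str
    status: str = "draft"  # never sent automatically; a human reviews first


def select_customers(notes: dict[str, str], feature: str) -> dict[str, str]:
    # Stand-in for the agent's judgement: pick customers whose notes mention
    # the feature, and keep the matching sentence as the "why".
    selected = {}
    for customer, text in notes.items():
        for sentence in text.split("."):
            if feature.lower() in sentence.lower():
                selected[customer] = sentence.strip()
                break
    return selected


def write_drafts(notes: dict[str, str], feature: str, write_email) -> list[Draft]:
    # `write_email` is a placeholder for the model call that personalises each message.
    return [
        Draft(customer, reason, write_email(customer, feature, reason))
        for customer, reason in select_customers(notes, feature).items()
    ]
```

Keeping the reason alongside each draft matters for the review step: you can see at a glance why the agent picked that customer before you hit send.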

4. Auto-translate documentation and generate release notes

This one lives entirely in GitLab. Documentation changes trigger automatic translations and release notes. Viktor shared the open source project behind it if you want to dig in.

What Keeps Him Up at Night

Viktor closed with something that resonated: the shift from a technology problem to a people problem. AI can do a lot now. The harder challenge is resistance — people who won’t adopt it, teams who don’t trust it, cultures that haven’t caught up with the capability.

The other thing keeping him up: how to reach levels 4 and 5 with a legacy codebase. The adoption frameworks assume you can move fast. Legacy systems rarely let you. That tension is real, and it’s something a lot of product leaders are quietly wrestling with.

What I’m Taking Away

I’ve been building compounding AI systems for a few months. Hearing Robert and Viktor back to back — one from the engineering side, one from the product side — gave me something I didn’t expect: not just validation, but a clearer picture of the gaps in my own approach.

From Robert: the in the loop vs on the loop distinction. I’ve been moving in this direction — building systems that run independently, store learnings, and compound — but I hadn’t framed it this cleanly. Agentic engineering isn’t about prompting better. It’s about planning better, providing richer context, and getting out of the way. For me, that planning has since become spec-driven development: obsess over the spec, then let the agent build against it.

From Viktor, the takeaway is more personal. A product leader without an AI engineer on staff, using agents for analysis, research, and personalised outreach — it made me realise I’m leaving value on the table. The email example especially. I have users who gave me real feedback, and I now have features that address what they asked for. That’s a loop I should be closing.

Someone at the meetup also mentioned Pi.dev — worth a look if you’re building AI workflows.

If you’re interested in the Agentics Foundation community, they’re genuinely open and volunteer-run. No pitch, no paywall — just people building with AI and sharing what they learn.

Key Takeaways

- Fireflies.ai ships 15 products with ~100 people by treating automation as the operating model, not an optimisation.

- Agentic AI differs from generative AI in one critical way: it works in autonomous loops and learns from each cycle, rather than waiting for you to prompt it.

- The distinction between being in the loop (coding, vibe coding) and on the loop (agentic engineering) determines what scales.

- The 70% context window rule — checkpoint, compress, and restart before context degrades — keeps agent quality high across long tasks.

- Dan Shapiro’s 5 levels of AI adoption give you an honest framework for assessing where you are — and where you need to be.

- You don’t need an AI engineer to get real value from AI agents. Jupyter notebooks, markdown files, and clear prompts go a long way.

- The hardest AI adoption challenge isn’t technical — it’s cultural. Resistance to change and legacy codebases are the real blockers.

- Context engineering is the skill that separates mediocre AI results from compounding ones. The agent is only as good as what you give it.

If you’re building with agentic AI, I’d like to hear — what loop are you trying to close?